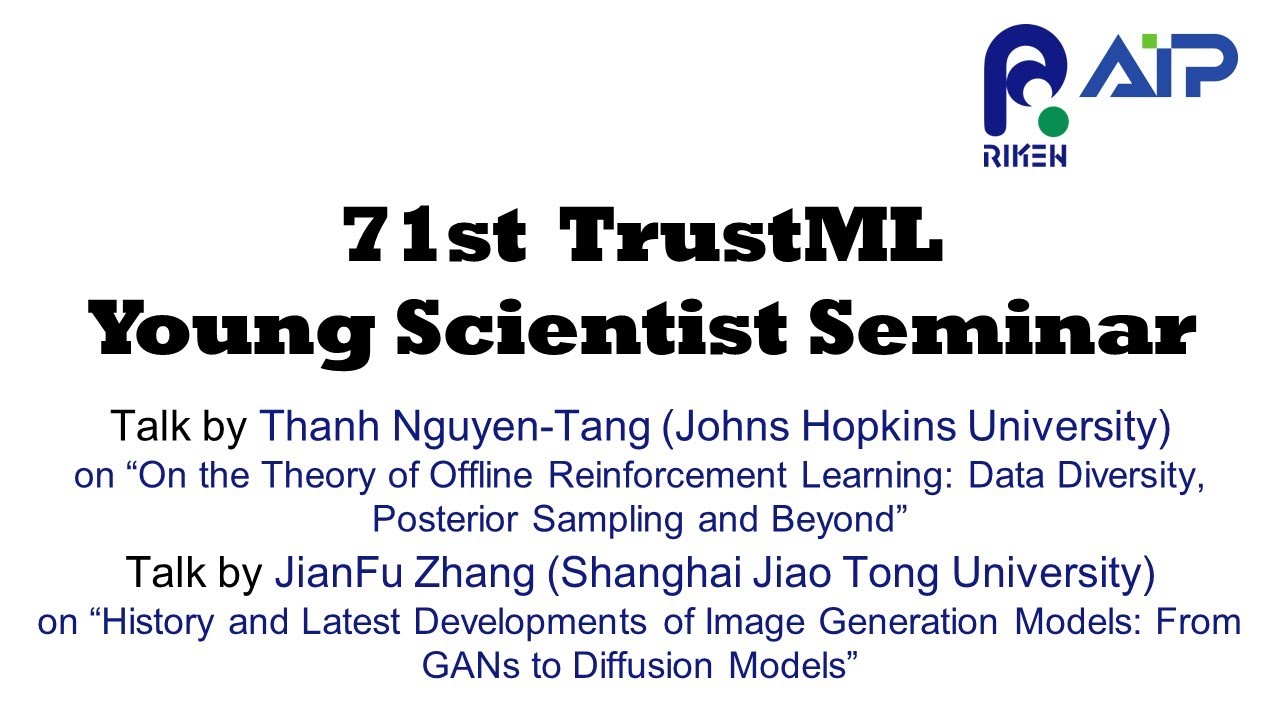

The 71st Seminar

Date and Time: August 2, 10:00 am – 12:00 pm (JST)

Venue: Zoom webinar

Language: English

10:00 am – 11:00 am (JST)

Speaker 1: Thanh Nguyen-Tang (Johns Hopkins University)

Title 1: On the Theory of Offline Reinforcement Learning: Data Diversity, Posterior Sampling and Beyond

11:00 am – 12:00 pm (JST)

Speaker 2: Jianfu Zhang (Shanghai Jiao Tong University)

Title 2: History and Latest Developments of Image Generation Models: From GANs to Diffusion Models

Short Abstract 1:

We seek to understand what enables sample-efficient learning from historical datasets for sequential decision-making, a setting typically known as offline reinforcement learning (RL), in the context of (value) function approximation, and which algorithms guarantee sample efficiency. In this work, we extend our understanding of these questions by (i) proposing a notion of data diversity that subsumes previous coverage measures in offline RL and (ii) using this notion to study three distinct classes of offline RL algorithms, based on version spaces (VS), regularized optimization (RO), and posterior sampling (PS). We establish that, under standard assumptions, VS-based, RO-based, and PS-based algorithms achieve comparable sample efficiency, recovering the state-of-the-art bounds when specialized to the finite function class and linear model cases. This is quite surprising, given that prior work showed an unfavourable sample complexity for the RO-based algorithm compared with the VS-based algorithm, while PS was rarely considered in offline RL because of its explorative nature. Notably, the (model-free) PS-based algorithm considered here is a novel method that we propose.

Bio 1:

Thanh Nguyen-Tang is a postdoctoral research fellow in the Department of Computer Science at Johns Hopkins University, US. His research focuses on characterizing the statistical and computational aspects of machine learning, with main topics including reinforcement learning, robust machine learning, and transfer learning. He received his PhD from Deakin University, Australia, in 2022.

Short Abstract 2:

This presentation aims to give scholars and researchers a comprehensive overview of image generation models, covering their history and latest developments, with a primary focus on Generative Adversarial Networks (GANs) and diffusion models. First, we will introduce the fundamental principles and limitations of GANs and explore their applications in image restoration, image synthesis, style transfer, and other areas. Second, we will examine the principles, advantages, and limitations of the emerging diffusion models and compare them with GANs. Finally, we will discuss potential future research directions and promising application areas for image generation models.

Bio 2:

Jianfu Zhang joined the Qingyuan Research Institute of Shanghai Jiao Tong University as a tenure-track assistant professor in February 2023, focusing on AI-generated content and trustworthy AI models. He has published over twenty papers in top AI conferences and journals. He is also a visiting scientist at RIKEN AIP. Jianfu earned his Ph.D. in Computer Science from the Department of Computer Science and Engineering at Shanghai Jiao Tong University, China, in 2020. Before that, he received his bachelor’s degree from the ACM Honored Class of Zhiyuan College, Shanghai Jiao Tong University, China, in 2015. His awards include the China National Scholarship and the “Yuanqing Yang Education Fund” Fellowship.